What are Quality Assurance Tester metrics?

Finding the right Quality Assurance Tester metrics can be daunting, especially when you're busy working on your day-to-day tasks. This is why we've curated a list of examples for your inspiration.

You can copy these examples into your preferred app, or use Tability to stay accountable.

Find Quality Assurance Tester metrics with AI

While we have some examples available, it's likely that you'll have specific scenarios that aren't covered here. You can use our free AI metrics generator below to generate your own metrics.

Examples of Quality Assurance Tester metrics and KPIs

1. Defect Detection Rate

The percentage of defects found by the QA team, compared to the total number of defects found, including those discovered post-development.

What good looks like for this metric: 80% or higher

Ideas to improve this metric:

- Increase test coverage
- Enhance tester training programmes
- Implement automated testing tools
- Conduct regular code reviews
- Encourage collaboration between developers and testers

2. Time to Fix Detected Defects

Average time it takes to fix defects once they are detected.

What good looks like for this metric: 24 to 48 hours

Ideas to improve this metric:

- Prioritise defect fixing in sprint planning
- Improve communication between QA and developers
- Automate regression testing
- Use defect tracking tools
- Provide comprehensive defect reports

3. Test Coverage

Percentage of code or functionalities tested by the QA team.

What good looks like for this metric: 95% or higher

Ideas to improve this metric:

- Automate repeated test cases
- Perform regular gap analysis
- Involve QA early in the development process
- Invest in robust testing tools
- Schedule frequent test plan reviews

4. Technical Debt Identified

Amount of potential rework or improvements identified that could prevent future defects.

What good looks like for this metric: Issue-free code

Ideas to improve this metric:

- Regularly refactor code
- Use static code analysis tools
- Document known tech debt
- Incorporate tech debt discussion in retrospectives
- Allocate time for tech debt resolution

5. Customer-reported Defects

Number of defects reported by customers after release compared to defects found internally.

What good looks like for this metric: Less than 10% of total defects

Ideas to improve this metric:

- Conduct thorough user acceptance testing
- Enhance early-stage testing processes
- Foster a customer feedback loop
- Strengthen pre-release testing cycles
- Improve real-time monitoring post-release
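Several of the metrics above reduce to simple ratios or averages. The sketch below shows one way to compute three of them from raw counts and timestamps. The function names, the input shapes, and the reading of Defect Detection Rate as QA-found defects over all defects found (QA plus post-release) are assumptions made for illustration, not definitions taken from a specific tool.

```python
from datetime import datetime, timedelta


def defect_detection_rate(found_by_qa: int, found_after_release: int) -> float:
    """Percentage of all known defects that QA caught (assumed reading)."""
    total = found_by_qa + found_after_release
    if total == 0:
        return 0.0  # hypothetical convention when no defects are recorded
    return 100.0 * found_by_qa / total


def customer_reported_share(customer_reported: int, found_internally: int) -> float:
    """Percentage of total defects that were reported by customers."""
    total = customer_reported + found_internally
    if total == 0:
        return 0.0  # hypothetical convention when no defects are recorded
    return 100.0 * customer_reported / total


def mean_time_to_fix(defects: list[tuple[datetime, datetime]]) -> timedelta:
    """Average of (fixed_at - detected_at) over (detected_at, fixed_at) pairs."""
    if not defects:
        return timedelta(0)
    total = sum((fixed - detected for detected, fixed in defects), timedelta(0))
    return total / len(defects)


# Made-up sample data, for illustration only.
sample_fixes = [
    (datetime(2024, 3, 1, 9, 0), datetime(2024, 3, 2, 9, 0)),   # fixed in 24 hours
    (datetime(2024, 3, 4, 10, 0), datetime(2024, 3, 6, 10, 0)),  # fixed in 48 hours
]

print(defect_detection_rate(84, 16))    # 84.0
print(customer_reported_share(16, 84))  # 16.0
print(mean_time_to_fix(sample_fixes))
```

With these made-up numbers, the detection rate clears the 80% target, while the customer-reported share sits above the 10% threshold.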


1. Defect Density

Defect density measures the number of defects confirmed in the software during a specific period of development divided by the size of the software.

What good looks like for this metric: Less than 1 defect per 1,000 lines of code

Ideas to improve this metric:

- Implement peer code reviews
- Conduct regular testing phases
- Adopt test-driven development
- Use static code analysis tools
- Enhance developer training programmes

2. Code Coverage

Code coverage is the percentage of your code which is tested by automated tests.

What good looks like for this metric: 80% - 90%

Ideas to improve this metric:

- Review untested code sections
- Invest in automated testing tools
- Aim for high test case quality
- Integrate continuous integration practices
- Regularly refactor and simplify code

3. Cycle Time

Cycle time measures the time from when work begins on a feature until it's released to production.

What good looks like for this metric: 1 - 5 days

Ideas to improve this metric:

- Streamline build processes
- Improve collaboration tools
- Enhance team communication rituals
- Limit work in progress (WIP)
- Automate repetitive tasks

4. Technical Debt

Technical debt represents the implied cost of future rework caused by choosing an easy solution now instead of a better approach.

What good looks like for this metric: Under 5% of total project cost

Ideas to improve this metric:

- Regularly refactor existing code
- Set priority levels for debt reduction
- Maintain comprehensive documentation
- Conduct technical debt assessments
- Encourage practices to avoid accumulating debt

5. Customer Satisfaction

Customer satisfaction measures the level of contentment clients feel with the software, often gauged through surveys.

What good looks like for this metric: Above 80% satisfaction rate

Ideas to improve this metric:

- Gather feedback through surveys
- Implement a user-centric design approach
- Enhance customer support services
- Ensure frequent updates and improvements
- Analyse and respond to customer complaints
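Defect density, cycle time, and the technical debt target above can each be computed from a handful of inputs. The following is a minimal sketch under stated assumptions: the function names are hypothetical, software size is measured in lines of code, cycle time is counted in whole days, and technical debt is expressed as estimated rework cost over total project cost.

```python
from datetime import date
from statistics import mean


def defect_density(confirmed_defects: int, lines_of_code: int) -> float:
    """Confirmed defects per 1,000 lines of code."""
    if lines_of_code <= 0:
        raise ValueError("lines_of_code must be positive")
    return confirmed_defects / (lines_of_code / 1000)


def average_cycle_time_days(features: list[tuple[date, date]]) -> float:
    """Mean days from work start to production release, per (started, released) pair."""
    return mean((released - started).days for started, released in features)


def technical_debt_ratio(rework_cost: float, total_project_cost: float) -> float:
    """Estimated rework cost as a percentage of total project cost (assumed reading)."""
    if total_project_cost <= 0:
        raise ValueError("total_project_cost must be positive")
    return 100.0 * rework_cost / total_project_cost


# Made-up sample data, for illustration only.
shipped = [
    (date(2024, 5, 6), date(2024, 5, 8)),    # 2 days
    (date(2024, 5, 13), date(2024, 5, 17)),  # 4 days
]

print(defect_density(45, 60_000))           # 0.75 defects per 1,000 lines
print(average_cycle_time_days(shipped))     # 3 days on average
print(technical_debt_ratio(4_000, 100_000)) # 4.0
```

All three sample values fall inside the targets listed above: under 1 defect per 1,000 lines, a cycle time of 1 to 5 days, and debt under 5% of project cost.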


1. Feature Implementation Ratio

The ratio of implemented features to planned features.

What good looks like for this metric: 80-90%

Ideas to improve this metric:

- Prioritise features based on user impact
- Allocate dedicated resources for feature development
- Conduct regular progress reviews
- Utilise agile methodologies for iteration
- Ensure clear feature specifications

2. User Acceptance Test Pass Rate

Percentage of features passing user acceptance testing.

What good looks like for this metric: 95%+

Ideas to improve this metric:

- Enhance test case design
- Involve users early in the testing process
- Provide comprehensive user training
- Utilise automated testing tools
- Identify and fix defects promptly

3. Bug Resolution Time

Average time taken to resolve bugs during feature development.

What good looks like for this metric: 24-48 hours

Ideas to improve this metric:

- Implement a robust issue tracking system
- Prioritise critical bugs
- Conduct regular team stand-ups
- Improve cross-functional collaboration
- Establish a swift feedback loop

4. Code Quality Index

Assessment of code quality using a standard index or score.

What good looks like for this metric: 75-85%

Ideas to improve this metric:

- Conduct regular code reviews
- Utilise static code analysis tools
- Refactor code periodically
- Strictly adhere to coding standards
- Invest in developer training

5. Feature Usage Frequency

Frequency at which newly implemented features are used.

What good looks like for this metric: 70%+ usage of released features

Ideas to improve this metric:

- Enhance user interface design
- Provide user guides or tutorials
- Gather user feedback on new features
- Offer feature usage incentives
- Regularly monitor usage statistics
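One way to keep percentage-based metrics such as these honest is to compare each value against its minimum target in a small scorecard. The sketch below does that for the Feature Implementation Ratio and the User Acceptance Test Pass Rate. The helper names and the release figures are hypothetical; only the 80% and 95% minimums come from the targets listed above.

```python
def percentage(part: int, whole: int) -> float:
    """Return part/whole as a percentage; whole must be positive."""
    if whole <= 0:
        raise ValueError("whole must be positive")
    return 100.0 * part / whole


def below_target(card: dict[str, tuple[float, float]]) -> list[str]:
    """Names of metrics whose value is under its minimum target."""
    return [name for name, (value, minimum) in card.items() if value < minimum]


# Hypothetical release data, for illustration only.
PLANNED_FEATURES = 20
IMPLEMENTED_FEATURES = 17
UAT_PASSED = 16  # out of the 17 implemented features

# Each entry maps a metric name to (measured value, minimum target).
scorecard = {
    "Feature Implementation Ratio": (
        percentage(IMPLEMENTED_FEATURES, PLANNED_FEATURES),
        80.0,
    ),
    "User Acceptance Test Pass Rate": (
        percentage(UAT_PASSED, IMPLEMENTED_FEATURES),
        95.0,
    ),
}

for name, (value, minimum) in scorecard.items():
    status = "ok" if value >= minimum else "below target"
    print(f"{name}: {value:.1f}% (target {minimum:.0f}%+) -> {status}")
```

In this made-up release, 17 of 20 planned features shipped (85%), which meets the target, while 16 of 17 features passing acceptance testing (about 94.1%) falls just short of 95%.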


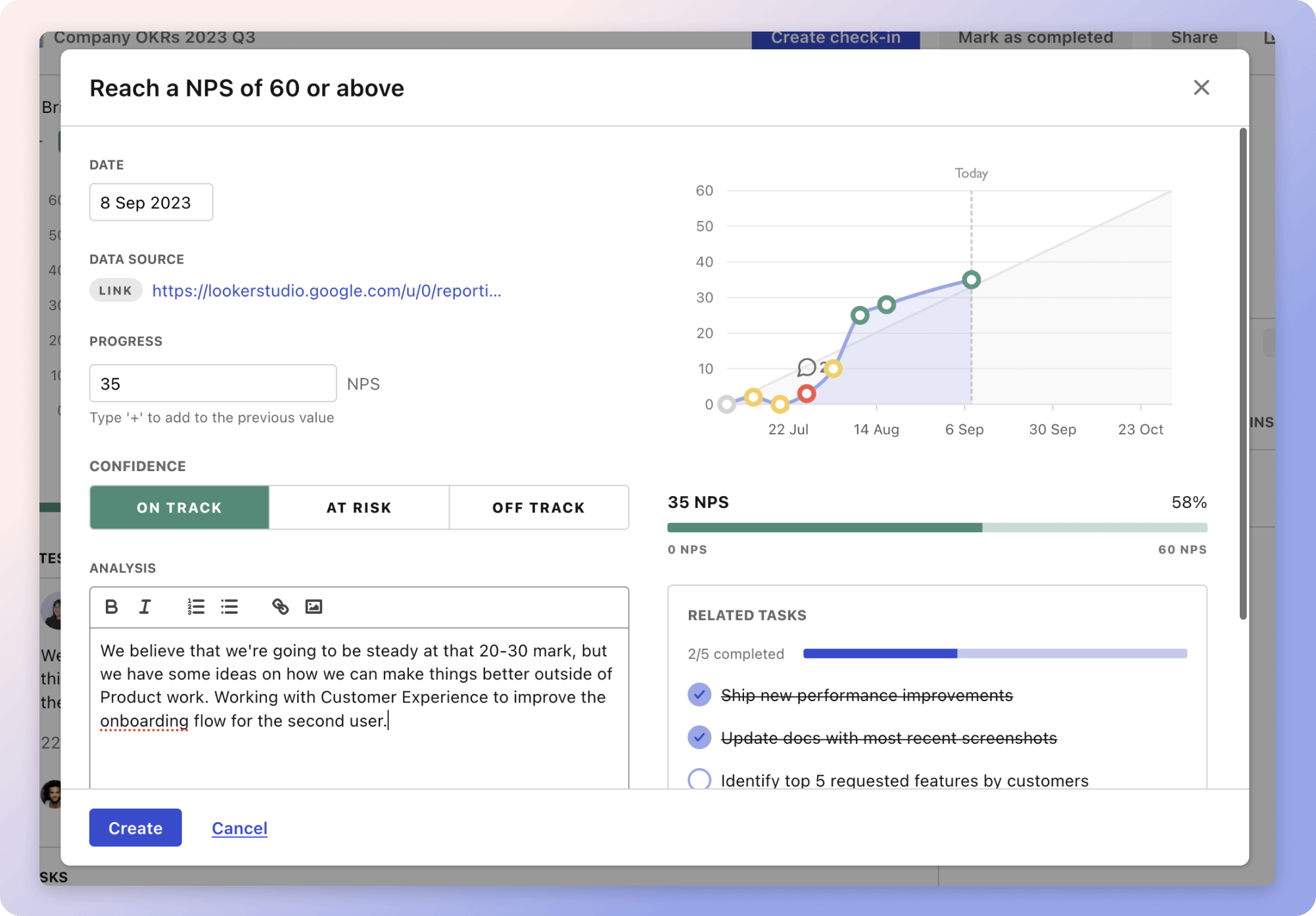

Tracking your Quality Assurance Tester metrics

Having a plan is one thing; sticking to it is another.

Don't fall into the set-and-forget trap. It is important to adopt a weekly check-in process to keep your strategy agile – otherwise this is nothing more than a reporting exercise.

A tool like Tability can also help you by combining AI and goal-setting to keep you on track.

More metrics recently published

We have more examples to help you below.

- The best metrics for Increase Calls Through Google My Business
- The best metrics for GRC MSSP Compliance
- The best metrics for Reduce Courier Costs
- The best metrics for Reducing Courier Costs
- The best metrics for Operations Management Success
- The best metrics for Ensuring sticker quality

Planning resources

OKRs are a great way to translate strategies into measurable goals. Here is a list of resources to help you adopt the OKR framework:

- Tability's check-ins will save you hours and increase transparency