The strategy titled "Maximising AI use in deepfake detection with deep learning" aims to improve the detection of deepfake images and videos using deep learning techniques within the cybersecurity sector. One core aspect is developing a comprehensive deepfake dataset by collecting and curating diverse materials and collaborating with institutions to expand the dataset's scope. This dataset is essential for training models that differentiate between real and fake content.

The second aspect is implementing advanced deep learning models to improve detection accuracy. This involves researching new architectures, utilising transfer learning, and incorporating multi-modal approaches. Applying explainability techniques helps interpret model decisions, which is essential for trust in automated systems.

Lastly, the strategy focuses on integrating AI models within existing cybersecurity infrastructure. This includes identifying key platforms, creating user-friendly interfaces, and setting up real-time monitoring systems. Training sessions for cybersecurity teams and maintaining compliance with industry standards are critical to ensuring effective deployment.

The strategies

⛳️ Strategy 1: Develop a comprehensive deepfake dataset

- Collect diverse real and deepfake images and videos from various sources

- Annotate and label the dataset accurately to distinguish between real and deepfake content

- Ensure the dataset includes a wide range of scenarios and contexts

- Regularly update the dataset with samples produced by new deepfake technologies and methods

- Collaborate with other researchers and institutions to expand the dataset

- Make the dataset publicly available for research purposes with proper usage guidelines

- Implement strong data privacy and security measures for dataset handling

- Use automated tools to aid in dataset curation and management

- Conduct quality checks to maintain the integrity of the dataset

- Engage the community to crowdsource and validate dataset additions
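
As a rough illustration of the labelling and quality-check steps above, the following sketch validates a small dataset manifest. It is only a minimal example under stated assumptions: the `Sample` record, the `real`/`fake` label vocabulary, the file names, and the placeholder byte strings are all hypothetical, and a real pipeline would likely hash actual media files and apply perceptual (not only exact) duplicate detection.

```python
import hashlib
from collections import Counter
from dataclasses import dataclass

VALID_LABELS = {"real", "fake"}  # assumed label vocabulary


@dataclass(frozen=True)
class Sample:
    path: str       # hypothetical file location
    label: str      # expected to be "real" or "fake"
    source: str     # where the item was collected
    content: bytes  # raw media bytes (tiny placeholders here)


def check_manifest(samples):
    """Return (clean_samples, problems) after label and exact-duplicate checks."""
    seen = {}  # content hash -> path of the first sample with that content
    clean, problems = [], []
    for s in samples:
        if s.label not in VALID_LABELS:
            problems.append((s.path, "invalid label"))
            continue
        digest = hashlib.sha256(s.content).hexdigest()
        if digest in seen:
            problems.append((s.path, f"duplicate of {seen[digest]}"))
            continue
        seen[digest] = s.path
        clean.append(s)
    return clean, problems


def label_balance(samples):
    """Count samples per label so class imbalance is easy to spot."""
    return Counter(s.label for s in samples)


samples = [
    Sample("a.mp4", "real", "studio", b"aaa"),
    Sample("b.mp4", "fake", "faceswap", b"bbb"),
    Sample("c.mp4", "fake", "faceswap", b"bbb"),  # same bytes as b.mp4
    Sample("d.mp4", "unknown", "web", b"ddd"),    # label outside the vocabulary
]
clean, problems = check_manifest(samples)
print(label_balance(clean))
print(problems)
```

Running a check like this before each dataset release keeps mislabelled or duplicated items out of the training and evaluation splits.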

⛳️ Strategy 2: Implement advanced deep learning models

- Research the latest deep learning architectures suitable for deepfake detection

- Develop and test new models that distinguish deepfakes from real content with high accuracy

- Utilise transfer learning from pre-trained models on related tasks

- Incorporate multi-modal deep learning approaches for improved results

- Optimise model parameters for accuracy and inference speed

- Integrate explainability techniques within models to interpret decisions

- Collaborate with experts in AI and cybersecurity for model improvements

- Deploy models in testing environments to evaluate real-world effectiveness

- Continuously refine models based on feedback and new data

- Share model findings with the broader cybersecurity community for peer review
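
To make the multi-modal and evaluation steps above more concrete, here is a small sketch that fuses per-modality scores and measures the result. It assumes two hypothetical detectors (video and audio) that each output a probability that a clip is fake, and it uses simple weighted averaging (late fusion) as one possible way to combine them; the scores, weight, and threshold are invented for illustration and are not results from any real model.

```python
def fuse_scores(video_scores, audio_scores, video_weight=0.5):
    """Late fusion: weighted average of per-modality fake probabilities."""
    return [
        video_weight * v + (1.0 - video_weight) * a
        for v, a in zip(video_scores, audio_scores)
    ]


def confusion_counts(labels, scores, threshold=0.5):
    """Count TP, FP, TN, FN where label 1 means 'fake' and 0 means 'real'."""
    tp = fp = tn = fn = 0
    for y, s in zip(labels, scores):
        pred = 1 if s >= threshold else 0
        if pred == 1 and y == 1:
            tp += 1
        elif pred == 1 and y == 0:
            fp += 1
        elif pred == 0 and y == 0:
            tn += 1
        else:
            fn += 1
    return tp, fp, tn, fn


def precision_recall_f1(labels, scores, threshold=0.5):
    """Compute precision, recall, and F1 for the 'fake' class."""
    tp, fp, _tn, fn = confusion_counts(labels, scores, threshold)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


# Invented example data: 1 = fake, 0 = real.
labels = [1, 1, 1, 0, 0, 0]
video_scores = [0.9, 0.7, 0.2, 0.7, 0.2, 0.1]
audio_scores = [0.9, 0.9, 0.4, 0.5, 0.2, 0.1]

fused = fuse_scores(video_scores, audio_scores)
precision, recall, f1 = precision_recall_f1(labels, fused)
print(f"precision={precision:.2f} recall={recall:.2f} f1={f1:.2f}")
```

Tracking precision and recall separately matters here because missed deepfakes and false alarms carry different costs for a security team, and the threshold can be tuned to trade one against the other.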

⛳️ Strategy 3: Enhance deployment and integration in cybersecurity systems

- Identify key platforms and systems where deepfake detection is critical

- Integrate AI models with existing cybersecurity infrastructure

- Develop user-friendly interfaces for seamless use by cybersecurity analysts

- Implement real-time monitoring and alert systems for detected deepfakes

- Conduct training sessions for cybersecurity teams on using AI tools

- Establish protocols for handling and responding to detected deepfakes

- Collaborate with stakeholders to ensure compliance with industry standards

- Regularly test and validate how effectively the models are integrated with existing systems

- Gather metrics on deployment impact to quantify success

- Create a roadmap for future AI enhancements in security applications
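
The real-time monitoring and alerting step above could take many forms. One simple option, sketched below, raises an alert only when several recent detector scores exceed a threshold, which reduces noise from isolated false positives. The class name, threshold, window size, and score stream are all hypothetical; a production system would feed this from its own detector and route alerts through its existing incident tooling.

```python
from collections import deque


class DeepfakeAlertMonitor:
    """Sliding-window alerting over a stream of detector scores."""

    def __init__(self, threshold=0.8, window=5, min_hits=3):
        self.threshold = threshold          # score at or above this counts as a hit
        self.min_hits = min_hits            # hits needed within the window to alert
        self.recent = deque(maxlen=window)  # keeps only the latest `window` results

    def observe(self, score):
        """Record one score; return True when an alert should fire."""
        self.recent.append(score >= self.threshold)
        return sum(self.recent) >= self.min_hits


monitor = DeepfakeAlertMonitor(threshold=0.8, window=5, min_hits=3)
stream = [0.1, 0.9, 0.85, 0.2, 0.95, 0.1, 0.1, 0.1]  # invented scores
alerts = [monitor.observe(score) for score in stream]
print(alerts)
```

The window and hit count are the main tuning knobs: a larger window with a higher hit count gives fewer but more reliable alerts, at the cost of slower reaction.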

Bringing accountability to your strategy

It's one thing to have a plan, it's another to stick to it. We hope that the examples above will help you get started with your own strategy, but we also know that it's easy to get lost in the day-to-day effort.

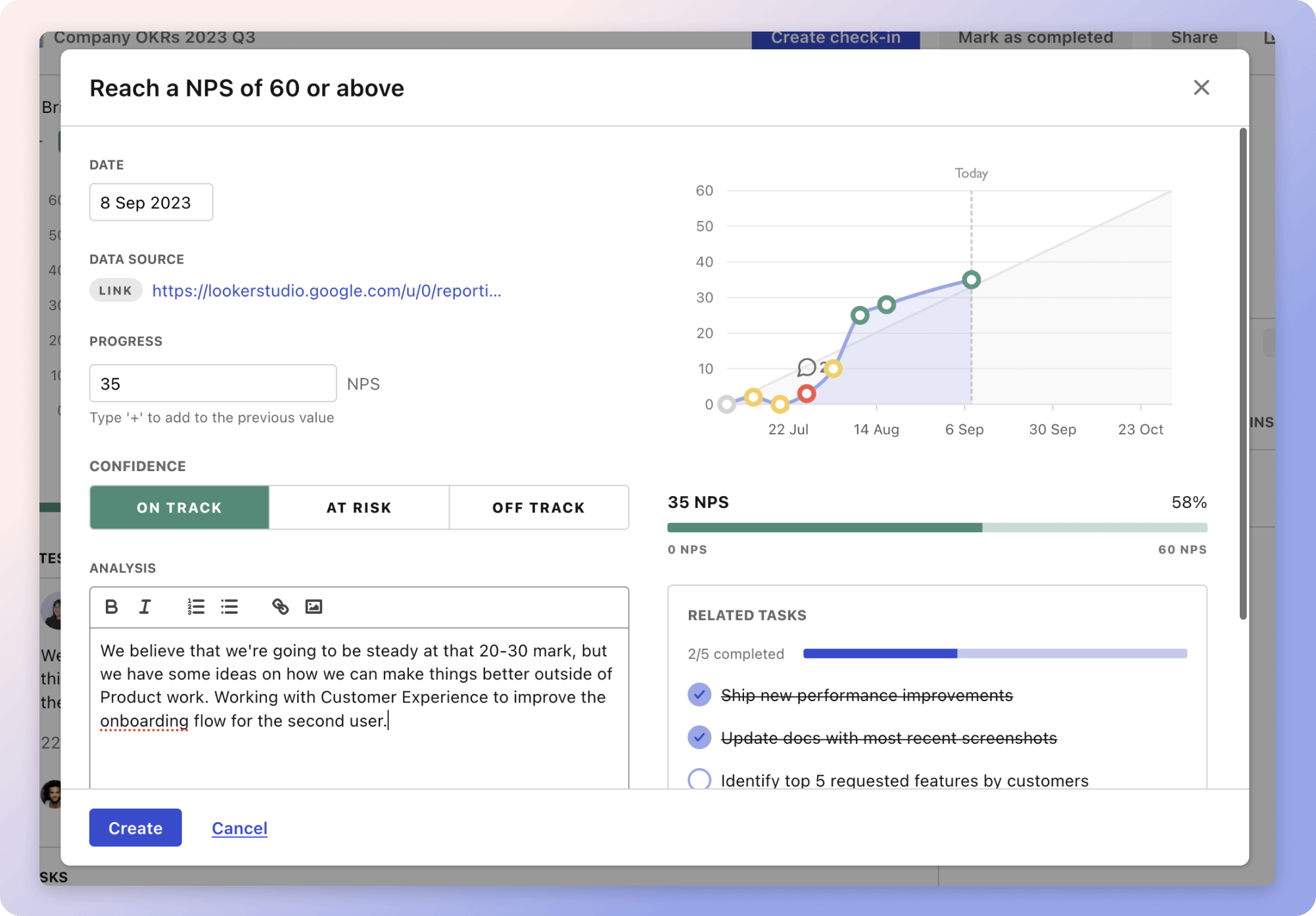

That's why we built Tability: to help you track your progress, keep your team aligned, and make sure you're always moving in the right direction.

Give it a try and see how it can help you bring accountability to your strategy.